Liquid Cold Plate Technologies: The Mandatory Thermal Architecture for 2026 AI Compute

As AI model parameters scale from the billions to the trillions, the thermal density of GPU clusters has outgrown what traditional air cooling can physically remove. With modern compute racks breaching the 100kW threshold, a robust liquid cold plate architecture is no longer optional; it is a critical infrastructural mandate. A high-performance custom heatsink or direct-to-chip liquid cooling system is the only viable engineering pathway to the stringent power efficiency targets set by upcoming silicon generations, including architectures highlighted at NVIDIA GTC 2026.

Quick Answer & Key Takeaways

For procurement managers and thermal engineers evaluating next-generation server cooling, here are the critical metrics driving the 2026 liquid cooling landscape:

Thermal Density Limits: Traditional air cooling peaks at 40kW per rack. Modern AI clusters demand 100kW–150kW per rack, necessitating direct-to-chip liquid cold plates.

PUE Optimization: Liquid cooling can drive Power Usage Effectiveness (PUE, the ratio of total facility power to IT power) down to 1.03–1.08, within the limits of strict EU (PUE < 1.1) and global energy mandates.

Manufacturing Precision: Next-generation cold plates require CNC machining tolerances of ±0.02mm and utilize composite materials achieving thermal conductivities up to 400W/(m·K).

TCO Reduction: Despite higher initial CapEx, optimized cold plate systems lower the Total Cost of Ownership (TCO) to about 85% of that of an equivalent high-velocity air cooling system, a saving of roughly 15%.

The 100kW+ Rack Challenge: Outpacing Traditional Thermal Design

The shift to AI processing has fundamentally altered hardware thermal requirements. Legacy data center infrastructures were designed to manage thermal loads of approximately 8kW per rack. Today, high-performance computing (HPC) nodes and AI superclusters routinely operate between 100kW and 150kW per rack, with emerging designs pushing 200kW.

Traditional air cooling systems hit a practical ceiling at 40kW per rack. Force-cooling higher-density racks with air produces unacceptable acoustic noise and vibration, along with a severe spike in PUE (often exceeding 1.6). To achieve the industry goal of “reducing energy consumption per kilowatt of compute by 30%,” direct liquid cooling is required.
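To make the PUE comparison concrete, the short sketch below computes how much facility energy per kilowatt of compute changes when PUE moves from the air-cooled figure to a liquid-cooled one. It uses the standard definition of PUE (total facility power divided by IT power); pairing 1.6 with 1.08 is an illustrative assumption based on the figures quoted in this article, not a measured result, and the function names are hypothetical.

```python
def pue(total_facility_kw: float, it_load_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_load_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_load_kw


def facility_energy_saving(pue_before: float, pue_after: float) -> float:
    """Fractional drop in facility energy drawn per kW of IT load."""
    return 1.0 - pue_after / pue_before


# Assumed pairing of PUE values quoted in the article (illustrative only).
air_pue = 1.6      # force-cooled air at high rack density
liquid_pue = 1.08  # upper end of the quoted liquid-cooled range

saving = facility_energy_saving(air_pue, liquid_pue)
print(f"Facility energy per kW of compute falls by {saving:.1%}")
```

Under these assumptions the saving comes out slightly above the 30% target, which is why the PUE range matters more than any single cooling component.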

Liquid Cooling Architectures: Why Cold Plates Dominate

The industrial thermal management sector classifies liquid cooling into three primary architectures. Among them, direct-to-chip technology remains the most commercially viable for OEM and enterprise deployment.

Liquid Cold Plate (Direct-to-Chip): Currently holding over 60% market share, this technology uses highly engineered metal blocks (copper or aluminum) with internal micro-channels. Coolant flows through the cold plate and absorbs the heat dissipated by the CPU/GPU, up to its full Thermal Design Power (TDP). It is favored because it integrates with existing server architectures and the coolant never contacts the electronics.

Immersion Cooling: Servers are fully submerged in a dielectric (electrically non-conductive) fluid, such as fluorinated liquids or synthetic oils. While it offers the highest theoretical thermal efficiency (driving PUE down to 1.03), it requires a fundamental redesign of data center infrastructure and specialized hardware handling.

Direct Spray Cooling: A hybrid approach where dielectric fluid is sprayed directly onto heat-generating components. It offers localized high-flux cooling but presents complex fluid recovery and maintenance challenges.

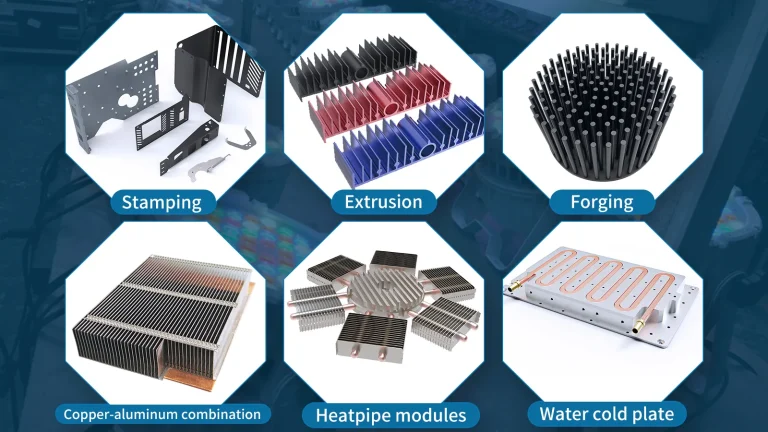
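For the cold plate architecture, a useful first sizing step is the coolant flow needed to carry the rack's heat away, from the energy balance Q = ṁ · c_p · ΔT. The sketch below assumes a water-like coolant (c_p of about 4186 J/(kg·K), density of about 997 kg/m³) and a 10 K coolant temperature rise; these property values, the temperature rise, and the function name are assumptions for illustration, not figures from a specific system.

```python
def required_flow_lpm(
    heat_w: float,
    delta_t_k: float,
    cp_j_per_kg_k: float = 4186.0,      # assumed: water near room temperature
    density_kg_per_m3: float = 997.0,   # assumed: water near room temperature
) -> float:
    """Coolant volume flow (litres per minute) needed to absorb heat_w
    with a coolant temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("Temperature rise must be positive")
    mass_flow_kg_s = heat_w / (cp_j_per_kg_k * delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / density_kg_per_m3
    return volume_flow_m3_s * 1000.0 * 60.0  # m^3/s -> L/min


rack_heat_w = 100_000.0  # 100kW rack, as discussed in the article
flow = required_flow_lpm(rack_heat_w, delta_t_k=10.0)
print(f"Required flow: {flow:.0f} L/min for a 10 K rise")
```

With these inputs the result is on the order of 140 L/min per rack. Allowing a larger temperature rise reduces the required flow proportionally, at the cost of warmer coolant at the last cold plate in the loop.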

Core Component Engineering and Supply Chain

The global liquid cooling market is projected to exceed a $27 billion (200 billion RMB) valuation by 2026. The upstream supply chain is heavily dependent on the precision manufacturing of core components:

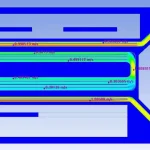

Micro-Channel Cold Plates: The thermal bottleneck lies in the fluid boundary layer, so the design goal is more wetted surface area and thinner boundary layers. Advanced CNC machining and skived-fin technologies are critical to increasing internal surface area without inducing severe pressure drops.

Coolant Formulations: The market is split between deionized (DI) water systems, which require advanced anti-corrosion and biocide management, and dielectric fluorinated fluids, which currently hold a 45% market share in high-density applications.
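The trade-off between heat transfer and pressure drop in micro-channels can be sketched with textbook laminar-flow relations. The example below treats each rectangular channel as an equivalent round tube of the same hydraulic diameter, using Nu = 4.36 (fully developed laminar flow, uniform heat flux) and the Hagen-Poiseuille pressure drop. That round-tube simplification, the water properties, and the channel dimensions are all assumptions chosen for illustration; a real design would use aspect-ratio-specific correlations or CFD.

```python
def hydraulic_diameter(width_m: float, height_m: float) -> float:
    """Hydraulic diameter of a rectangular channel: 4 * area / perimeter."""
    return 2.0 * width_m * height_m / (width_m + height_m)


def laminar_htc(d_h_m: float, k_fluid: float = 0.6, nusselt: float = 4.36) -> float:
    """Heat transfer coefficient h = Nu * k / D_h in W/(m^2*K).
    Nu = 4.36 is the round-tube, uniform-heat-flux value (an approximation here)."""
    return nusselt * k_fluid / d_h_m


def laminar_pressure_drop(
    d_h_m: float, length_m: float, velocity_m_s: float, viscosity_pa_s: float = 8.9e-4
) -> float:
    """Hagen-Poiseuille pressure drop in Pa for a round tube of diameter d_h_m."""
    return 32.0 * viscosity_pa_s * length_m * velocity_m_s / d_h_m**2


# Hypothetical channel geometries: halve both dimensions, keep velocity fixed.
coarse = hydraulic_diameter(1.0e-3, 2.0e-3)
fine = hydraulic_diameter(0.5e-3, 1.0e-3)

h_gain = laminar_htc(fine) / laminar_htc(coarse)
dp_gain = laminar_pressure_drop(fine, 0.05, 1.0) / laminar_pressure_drop(coarse, 0.05, 1.0)
print(f"Heat transfer coefficient x{h_gain:.1f}, pressure drop x{dp_gain:.1f}")
```

Under these assumptions, halving the channel size doubles the heat transfer coefficient but quadruples the pressure drop at the same coolant velocity, which is the tension that fin geometry and machining precision have to manage.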

Technical Breakthroughs in Custom Thermal Hardware

The primary barriers to liquid cooling adoption (cost, maintenance complexity, and leakage risk) are being rapidly lowered by advances in hardware manufacturing:

Material Science Innovation: Composite cold plate materials have pushed thermal conductivity to 400W/(m·K), roughly double the conductivity of standard extruded aluminum alternatives.

Tight-Tolerance Machining: To ensure even thermal interface material (TIM) compression and leak-free sealing, top-tier manufacturing facilities now hold surface flatness and internal micro-channel dimensions to ±0.02mm.

Leak Mitigation: Smart quick-disconnects (QDs) and AI-driven pressure monitoring systems have reduced leak detection and containment response times to under 1 second.
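The effect of base material conductivity can be estimated with a one-dimensional conduction model, R = t / (k · A). The sketch below compares a 400W/(m·K) composite against an assumed 200W/(m·K) aluminum plate; the 3 mm base thickness, 50 mm × 50 mm contact area, and 1000 W heat load are hypothetical values, and the model ignores heat spreading, the TIM layer, and convection into the coolant.

```python
def conduction_resistance(
    thickness_m: float, conductivity_w_mk: float, area_m2: float
) -> float:
    """1-D conduction resistance of a flat plate in K/W: R = t / (k * A)."""
    return thickness_m / (conductivity_w_mk * area_m2)


# Hypothetical cold plate base and chip heat load (illustrative only).
base_thickness_m = 3.0e-3
contact_area_m2 = 0.050 * 0.050
chip_heat_w = 1000.0

for name, k in (("aluminum (assumed 200 W/(m*K))", 200.0),
                ("composite (400 W/(m*K))", 400.0)):
    r = conduction_resistance(base_thickness_m, k, contact_area_m2)
    print(f"{name}: R = {r * 1000:.1f} mK/W, base temperature drop = {r * chip_heat_w:.1f} K")
```

In this simplified model, doubling the conductivity halves the temperature drop across the base. The base is only one term in the overall thermal stack, so the system-level gain is smaller than a factor of two.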

Future-Proof Your Data Center Hardware

As global standards like IEEE P2859 solidify, and regulatory bodies mandate sub-1.3 PUE ratings for all new ultra-large-scale data centers, transitioning to liquid thermal management is becoming a hard engineering requirement. Relying on off-the-shelf heat sinks in next-generation AI clusters risks thermal throttling and, in the worst case, critical hardware failure.

Ready to eliminate thermal bottlenecks in your high-density hardware? Partner with a seasoned thermal manufacturing facility. Submit your CAD drawings today to request a comprehensive thermal calculation, fluid dynamics assessment, and a rapid-prototyping quote for your custom liquid cold plates.